Using pre-push extensions

After you create a Crawling Module source, you may need to customize the way source items are indexed. One way to do this is to use an extension, a Python script that you write and that runs for every item crawled by your source.

Coveo lets you apply extensions at two distinct stages of the indexing process:

- On the Crawling Module host: This type of extension is called a pre-push extension and is the topic of this article. A pre-push extension is useful when you want to leverage data that’s only available on your server to customize content indexing.

- After your content is pushed to the Coveo Platform: This type of extension is called an indexing pipeline extension (IPE). For details, see Indexing Pipeline Extension Overview.

This article details how to create and apply a pre-push extension to a Crawling Module source.

Apply a pre-push extension to a source

To apply a pre-push extension to a Crawling Module source:

-

Write a Python script that implements the logic you want to apply to crawled items.

-

Reference the script in the

PrePushExtensionparameter of your source’s JSON configuration.Instructions to set the

PrePushExtensionparameter-

On the Sources (platform-ca | platform-eu | platform-au) page, click the desired source, and then click More > Edit configuration with JSON in the Action bar.

-

Click the

Parameterstab located above the JSON configuration box. -

Add a comma (

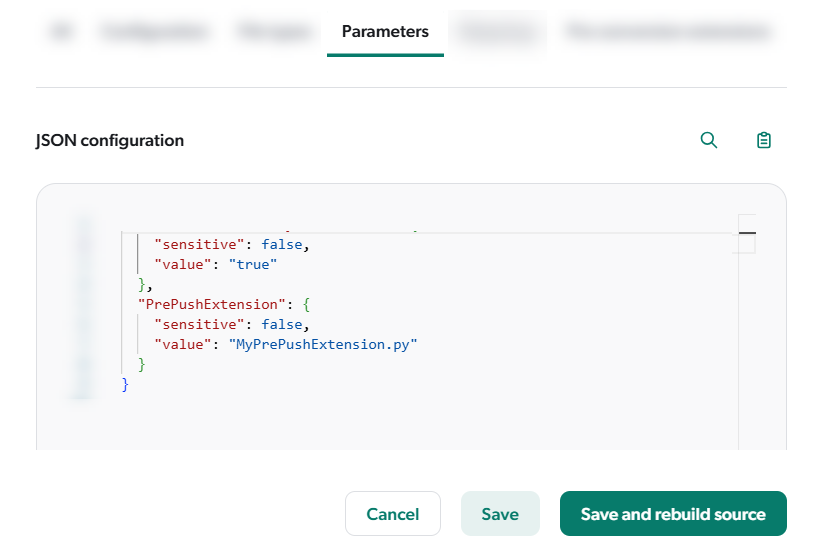

,) after the last parameter configuration, and then add thePrePushExtensionparameter configuration with the value set to your script file name. For example, if your script file name isMyPrePushExtension.py, you must add the following to theparameterssection of your source JSON configuration:"PrePushExtension": { "sensitive": false, "value": "MyPrePushExtension.py" }The bottom of the JSON configuration box should now be similar to the following:

-

-

Add your script’s external dependencies to the

requirements.txtfile in theC:\ProgramData\Coveo\Maestro\Python3PrePushExtensionsfolder. -

Implement logging in your script, ideally to a subfolder under

C:\ProgramData\Coveo\Maestro\Logs, to help with debugging. See the provided script examples for logging logic. -

Allowlist https://pypi.org in your security solution to enable the download of required Python modules.

Write the Python script

A pre-push extension script must meet these requirements:

- It must be a Python 3 script.

- It must be saved in the `C:\ProgramData\Coveo\Maestro\Python3PrePushExtensions` folder (`ProgramData` is hidden by default).

- It must define a `do_extension` function that accepts the `body` argument and returns the modified `body`. This argument is a JSON representation of the crawled item, for example:

      {
        "DocumentId": "file:///c:/tmp/testdata/sample.txt",
        "CompressionType": "ZLIB",
        "CompressedBinaryData": "eAELycgsVgCiRIWS1OISPQAplwUk",
        "clickableuri": "file:///C:/Tmp/TestData/sample.txt",
        "date": "2022-05-04 15:07:39",
        "Permissions": [
          {
            "PermissionSets": [
              {
                "AllowAnonymous": false,
                "AllowedPermissions": [
                  {
                    "IdentityType": "GROUP",
                    "SecurityProvider": "Email Security Provider",
                    "Identity": "*@*",
                    "AdditionalInfo": {}
                  }
                ]
              }
            ]
          }
        ],
        "fileextension": "txt",
        "connectortype": "FileCrawler",
        "source": "File",
        "collection": "File Collection",
        "generateexcerpt": true,
        "contenttype": "",
        "originaluri": "file:///c:/tmp/testdata/sample.txt",
        "printableuri": "file:///C:/Tmp/TestData/sample.txt",
        "filename": "sample.txt",
        "permanentid": "9a3a317a4e49c31962b969967c15e51477b0fd9ca33dceac76c94982593b",
        "size": 15,
        "compressedsize": 21,
        "creationdate": "2022-05-04 15:07:23",
        "lastaccessdate": "2023-07-04 19:07:49",
        "folder": "C:\\Tmp\\TestData",
        "fileowner": "COVEO\\Bob",
        "lastwritedate": "2022-05-04 15:07:39",
        "parents": "<?xml version=\"1.0\" encoding=\"utf-16\"?><parents><parent name=\"C:\" uri=\"file:///C:/\" /><parent name=\"Tmp\" uri=\"file:///C:/Tmp/\" /><parent name=\"TestData\" uri=\"file:///C:/Tmp/TestData/\" /><parent name=\"sample.txt\" uri=\"file:///C:/Tmp/TestData/sample.txt\" /></parents>",
        "coveo_metadatasampling": 1
      }

To modify item data, compress the content and then base64-encode it, and set the `CompressionType` and `CompressedBinaryData` properties accordingly. See the Add item data example.

Avoid modifying the `Permissions` property using a pre-push extension, as it may allow unauthorized access to content in your search interface.
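As a rough illustration of the encoding step, the sketch below compresses replacement text with zlib and then base64-encodes it, which matches the shape of the sample `CompressedBinaryData` value shown earlier. It assumes `body` can be handled as a Python dict; the helper names `encode_item_data` and `decode_item_data` and the replacement text are hypothetical and not part of any Coveo API.

```python
import base64
import zlib


def encode_item_data(text: str) -> str:
    """Zlib-compress UTF-8 text, then base64-encode the result (hypothetical helper)."""
    compressed = zlib.compress(text.encode("utf-8"))
    return base64.b64encode(compressed).decode("ascii")


def decode_item_data(encoded: str) -> str:
    """Reverse of encode_item_data; handy for checking a value while debugging."""
    return zlib.decompress(base64.b64decode(encoded)).decode("utf-8")


def do_extension(body):
    # Assumption: body behaves like a dict keyed by the property names shown above.
    body["CompressionType"] = "ZLIB"
    body["CompressedBinaryData"] = encode_item_data("Replacement item content.")
    return body
```

Keeping a decode helper next to the encoder makes it easy to confirm during testing that the value written to `CompressedBinaryData` round-trips to the text you intended.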

The properties in the |

The following shows a simple pre-push extension script template:

    # Import required Python libraries.
    import os
    import sys
    ...

    # Set up logging or other initial configurations.
    log_folder = os.path.join(os.getenv('COVEO_LOGS_ROOT'), 'Extensions', os.getenv('SOURCE_ID', 'unknown'))
    ...

    # ------------------------------------------------------------------------
    # Entry point for the extension. The do_extension function must be defined.
    # ------------------------------------------------------------------------
    def do_extension(body):
        # Apply transformation logic and log actions.
        ...
        return body

The Coveo Crawling Module sets environment variables you can access in your script.

An extension script runs automatically during a source content update. You can apply only one pre-push extension script per source. However, that script can call multiple functions, including those in other Python files.
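One way to organize a single script that delegates to several functions is sketched below. The helper names (`normalize_filename`, `add_content_category`) and the `contentcategory` key are hypothetical, and `body` is assumed to behave like a Python dict. The helpers could equally live in another Python file in the same folder and be imported.

```python
def normalize_filename(body):
    """Hypothetical helper: store the file name in lowercase."""
    if "filename" in body:
        body["filename"] = body["filename"].lower()
    return body


def add_content_category(body):
    """Hypothetical helper: derive a coarse category from the file extension."""
    extension = str(body.get("fileextension", "")).lower()
    body["contentcategory"] = "text" if extension in {"txt", "md"} else "other"
    return body


# Ordered list of transformations applied to each crawled item.
TRANSFORMS = [normalize_filename, add_content_category]


def do_extension(body):
    # Single entry point; each helper receives and returns the item body.
    for transform in TRANSFORMS:
        body = transform(body)
    return body
```

Keeping each transformation in its own function makes it possible to test the helpers individually before applying the script to a source.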

Coveo provides sample scripts covering common use cases. Use them as templates to build your own.

Coveo pre-push extension environment variables

The Crawling Module sets the following environment variables:

| Variable | Description |
|---|---|
| `COVEO_LOGS_ROOT` | Root folder for logging. |
| | The unique identifier of your Coveo organization. |
| `SOURCE_ID` | The unique identifier of the source currently being processed. |
| | Type of content operation currently being performed. |
| | Unique identifier of the current operation. It’s displayed in the activity details on the Activity Browser (platform-ca \| platform-eu \| platform-au) page. |
| | Unique identifier of the Crawling Module. It’s displayed on the Crawling Modules (platform-ca \| platform-eu \| platform-au) page. |
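The sketch below shows one way a script might read these variables to build a per-source log file. It uses only `COVEO_LOGS_ROOT` and `SOURCE_ID`, since both appear in the script template. The fallback to the system temp folder and the `extension.log` file name are assumptions, added so the code also runs outside the Crawling Module.

```python
import logging
import os
import tempfile


def build_logger():
    """Create a file logger under <logs root>/Extensions/<source id>."""
    # Fall back to the system temp folder when the variable is not set,
    # for example when testing the script outside the Crawling Module.
    logs_root = os.getenv("COVEO_LOGS_ROOT") or tempfile.gettempdir()
    source_id = os.getenv("SOURCE_ID", "unknown")
    log_folder = os.path.join(logs_root, "Extensions", source_id)
    os.makedirs(log_folder, exist_ok=True)

    logger = logging.getLogger(f"prepush.{source_id}")
    if not logger.handlers:  # Avoid duplicate handlers if called twice.
        handler = logging.FileHandler(os.path.join(log_folder, "extension.log"))
        handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
        logger.addHandler(handler)
        logger.setLevel(logging.INFO)
    return logger


LOGGER = build_logger()


def do_extension(body):
    # Assumption: body behaves like a dict with a DocumentId key.
    LOGGER.info("Processing %s", body.get("DocumentId", "<no id>"))
    return body
```

Creating the logger once at module level, rather than inside `do_extension`, avoids reopening the log file for every crawled item.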

Precautions when using pre-push extensions

Extensions can affect the performance of your source crawling. If a script runs too long or encounters errors, items will be indexed without applying the script, which may cause unexpected results in your search interface.
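A defensive pattern in the spirit of this precaution is to catch exceptions inside the script, log them, and return the item unchanged, while also recording how long each item takes. The sketch below assumes `body` behaves like a Python dict. The `transform` function, the `title` key, and the one-second threshold are hypothetical, and the logger is assumed to be configured elsewhere in the script.

```python
import copy
import logging
import time

LOGGER = logging.getLogger("prepush")

SLOW_ITEM_SECONDS = 1.0  # Hypothetical threshold for flagging slow items.


def transform(body):
    """Hypothetical transformation; replace with your own logic."""
    body["title"] = body["filename"].rsplit(".", 1)[0]
    return body


def do_extension(body):
    original = copy.deepcopy(body)  # Keep an untouched copy to fall back on.
    started = time.perf_counter()
    try:
        result = transform(body)
    except Exception:
        # Log the full traceback and hand back the item as it was crawled.
        LOGGER.exception("Extension failed for %s", original.get("DocumentId"))
        result = original
    elapsed = time.perf_counter() - started
    if elapsed > SLOW_ITEM_SECONDS:
        LOGGER.warning("Slow item (%.2fs): %s", elapsed, original.get("DocumentId"))
    return result
```

Logging the failure explicitly leaves a trace in your own log files of which items were indexed without the transformation, which can shorten debugging on a test source.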

Leading practice

Apply the extension to a duplicate of your production source with a name that clearly indicates it’s for testing purposes only. In this test source, crawl only a small subset of content for faster debugging and to limit the log file size. Only after fully testing and validating the pre-push extension in the test source should you apply it to your production source.

What’s next?

Review the pre-push extension examples to start writing your own extensions.